LLM

Transparency is central to us at Intric. When you interact with an assistant on our platform, specific processes are in place to protect your privacy and data sovereignty.

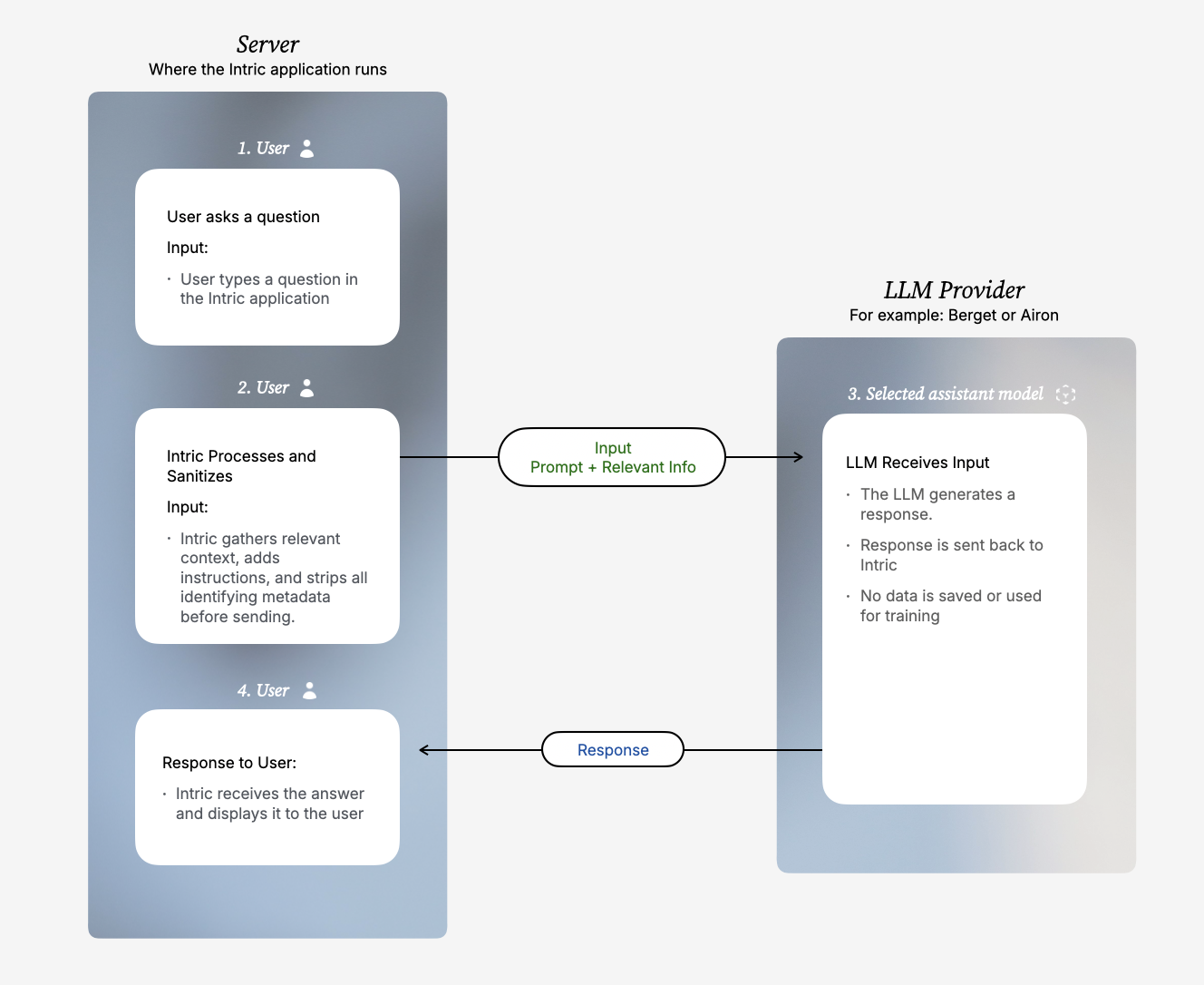

The process from your question to a finished answer occurs through a secure interaction between the Intric platform (where your data is managed) and your selected LLM provider (for example, Berget or Airon).

Step-by-step: How your data is handled

Step 1 — User

You ask a question (Input).

Input: Your prompt, chat history, and any attached files.

Step 2 — Intric (Server)

Intric processes the request before anything is sent to the LLM.

Action: Intric retrieves relevant background information to help answer your query and adds the necessary system instructions. Your prompt is sent exactly as you wrote it, but Intric removes all metadata, so no information about who sent it or which organization it belongs to is attached.
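
The step above can be illustrated with a minimal sketch. It assumes a hypothetical request object that carries both content and user metadata; the names `IncomingRequest` and `build_llm_payload` and all field names are illustrative assumptions, not Intric's actual implementation. Only the three content items named in this document are modeled. The payload is built from content fields only, so metadata is never copied into it.

```python
from dataclasses import dataclass


@dataclass
class IncomingRequest:
    """Hypothetical shape of a request as the platform sees it."""
    prompt: str
    # Metadata below stays on the platform side (assumed field names).
    user_id: str = ""
    email: str = ""
    ip_address: str = ""
    organization_id: str = ""
    auth_token: str = ""


def build_llm_payload(request, system_prompt, retrieved_context):
    """Assemble the provider payload from content fields only.

    Metadata fields on the request are never read here, so they
    cannot end up in the outgoing payload.
    """
    return {
        "system_prompt": system_prompt,
        "retrieved_context": list(retrieved_context),
        "prompt": request.prompt,  # forwarded unchanged
    }


request = IncomingRequest(
    prompt="What is our travel policy?",
    user_id="u-123",
    email="anna@example.com",
    organization_id="org-9",
)
payload = build_llm_payload(
    request,
    system_prompt="You are a helpful assistant.",
    retrieved_context=["Policy excerpt"],
)
```

Building the payload from an explicit set of content fields, rather than deleting known metadata fields from a copy of the request, means a newly added metadata field is excluded by default.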

Step 3 — Selected assistant model (LLM Provider)

The LLM receives the request and generates an answer.

Input: The prompt content (your exact question + relevant information from Knowledge or Tools).

Action: The LLM creates a response based entirely on the information provided. It has no information about the identity of the user or the organization. Immediately after the response is sent back, the LLM provider deletes both your input and the generated response.

Step 4 — User

You receive the final result.

Action: Intric receives the result from the LLM and displays the answer to you in the user interface.

Data sharing and privacy

To protect your privacy and that of your organization, we apply the principle of data minimization. This means the LLM provider only gets access to the content that is strictly necessary to perform the task; no user identity ever leaves your infrastructure.

In the table below, you can see exactly what data is sent to the LLM and what is kept completely private.

| This is sent to the LLM | This is NOT sent to the LLM |
|---|---|
| The user's exact prompt/question | User ID, username, or name |
| System prompt / instructions | Email addresses or IP addresses |
| Retrieved relevant information from Knowledge or Tools that the assistant can access | Authentication tokens |
| | Database IDs |
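
The left-hand column of the table can be read as an allowlist. The sketch below is a hedged illustration of how an outgoing payload could be checked against such a list; the field names and the function `find_unexpected_fields` are hypothetical and are not taken from Intric's code.

```python
# Assumed field names for the three items in the "sent" column.
ALLOWED_FIELDS = {"prompt", "system_prompt", "retrieved_context"}


def find_unexpected_fields(payload):
    """Return, in sorted order, every payload key not on the allowlist."""
    return sorted(set(payload) - ALLOWED_FIELDS)


clean = {"prompt": "Hello", "system_prompt": "Be concise.", "retrieved_context": []}
leaky = {"prompt": "Hello", "user_id": "u-123", "email": "anna@example.com"}

clean_result = find_unexpected_fields(clean)  # nothing to report
leaky_result = find_unexpected_fields(leaky)  # reports the two metadata keys
```

An allowlist flags any field that is not explicitly permitted, so it does not depend on listing every kind of metadata in advance.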

Summary

When you communicate with an LLM through Intric, your identity remains completely private. All user metadata is handled locally and never leaves your control. The chosen LLM provider only ever sees the isolated text required to generate a useful response. Furthermore, Intric has strict zero data retention clauses in all of its contracts with LLM providers. This guarantees that your prompts and the generated responses are never saved by the provider, nor are they ever used to train their AI models.